About Me

Saurabh Bagh

Senior Software Engineer - Engineering Manager - Founder

Berlin, Germany

Senior Software Engineer with 8+ years of experience building scalable backend systems, RESTful APIs, and distributed services using C#, .NET, and Python. Strong background in microservices architecture, cloud platforms (Azure/AWS), and data-driven systems. Proven track record of improving performance, reliability, and security of production systems. Passionate about building high-impact platforms that enhance matching efficiency, visibility, and user experience.

Programming Skills

Currently Working On

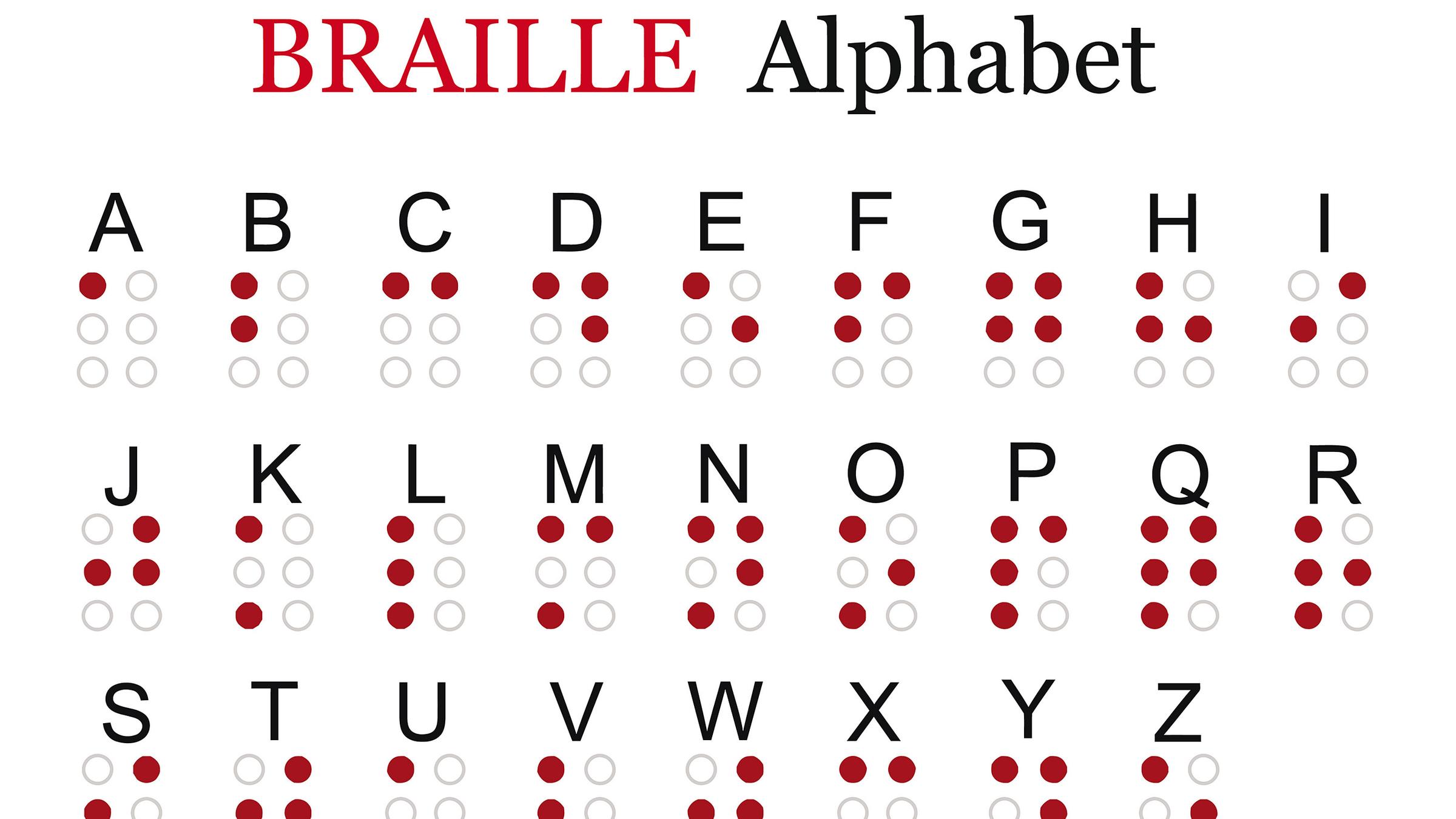

Braille Project

Designing braille patterns on the Ultrahaptics device

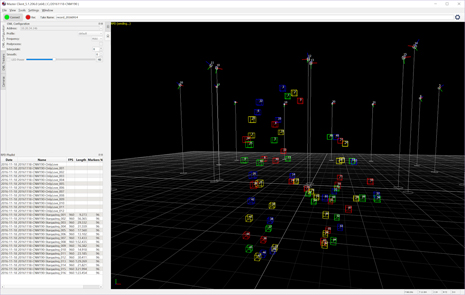

PhaseSpace Motion Capture

Integration with an avatar in Unity

Driving Simulator

Integration of Pupil Labs eye tracking with a motion platform